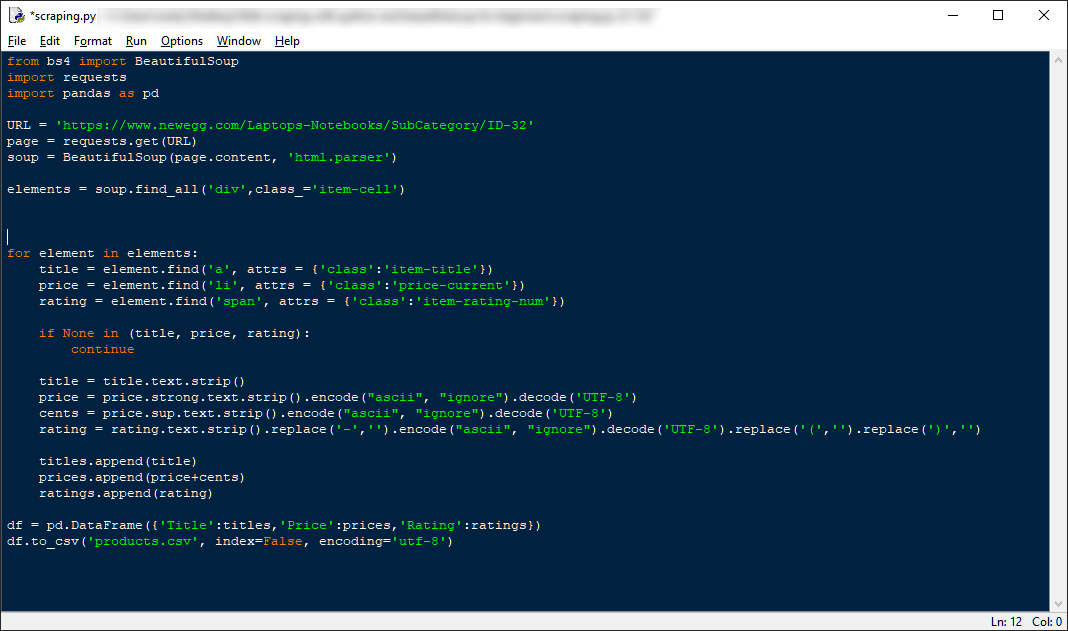

send_request(url)  # Now uses scraping_nd_request()

To keep our ScrapingClient clean and simple, we will create it as a class and then integrate it into our scrapers when we want to move them to production.

Here is an outline of our ScrapingClient class. It imports `ScrapeOpsRequests` from `scrapeops_python_requests.scrapeops_requests`, stores input parameters such as `scrapeops_proxy_settings`, `scrapeops_proxy_enabled`, `scrapeops_monitoring_enabled` and `num_concurrent_threads` on the client, and defines methods that do the following:

- Start the ScrapeOps monitor, which ships logs to the dashboard.
- Convert a URL into a ScrapeOps Proxy API URL.
- Send an HTTP request and retry failed responses.

Here we are creating the input parameters that we can use to configure how the ScrapingClient operates. You can add or change these variables; however, these are the input parameters we will define and why:

- `scrapeops_api_key` is your ScrapeOps API key.
- `scrapeops_proxy_enabled` tells the client whether or not to route your requests through the ScrapeOps proxy.
- `scrapeops_proxy_settings` lets you set the advanced functionality you would like to use from the ScrapeOps Proxy API.
- `scrapeops_monitoring_enabled` tells the client to monitor your scraper using the ScrapeOps Monitoring SDK.
- `spider_name` sets a spider name to be used by the ScrapeOps Monitoring SDK.
- `job_name` sets a job name to be used by the ScrapeOps Monitoring SDK.
- `num_concurrent_threads` sets the number of requests your scraper can make in parallel.
- `num_retries` sets the number of times the client will retry failed requests.
- `http_allow_list` sets which HTTP codes are considered successful responses.

Then, to start using this in our QuotesToScrape scraper, we just need to initialize it in our scraper. The scraper parses each response with `BeautifulSoup(html_response, "html.parser")`, loops through each quotes section to extract the quote and author, and adds the scraped data to the `scraped_quotes` list.
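To make the roles of `num_retries`, `http_allow_list` and `num_concurrent_threads` more concrete, here is a minimal sketch of a client that behaves roughly as described. It is not the original ScrapingClient and it does not use the ScrapeOps SDK: the class name `SketchScrapingClient`, the injected `fetch` callable, the shortened parameter names and the `proxy.example.com` endpoint are all assumptions made for illustration. `num_retries` is treated here as the total number of attempts.

```python
from concurrent.futures import ThreadPoolExecutor
from urllib.parse import urlencode


class SketchScrapingClient:
    """Illustrative stand-in for the client described above (hypothetical, not the original code)."""

    def __init__(self, fetch, api_key=None, proxy_enabled=False,
                 proxy_endpoint="https://proxy.example.com/v1/",  # placeholder endpoint (assumption)
                 num_concurrent_threads=1, num_retries=3, http_allow_list=(200, 404)):
        self.fetch = fetch  # callable: url -> (status_code, body); assumed for this sketch
        self.api_key = api_key
        self.proxy_enabled = proxy_enabled
        self.proxy_endpoint = proxy_endpoint
        self.num_concurrent_threads = num_concurrent_threads
        self.num_retries = num_retries  # treated as the total number of attempts
        self.http_allow_list = tuple(http_allow_list)

    def proxy_url(self, url):
        """Wrap the target URL in a proxy API URL when the proxy is enabled."""
        if not self.proxy_enabled:
            return url
        return self.proxy_endpoint + "?" + urlencode({"api_key": self.api_key, "url": url})

    def send_request(self, url):
        """Send the request, retrying until the status code is in http_allow_list."""
        target = self.proxy_url(url)
        for _ in range(self.num_retries):
            try:
                status, body = self.fetch(target)
            except Exception:
                continue  # a raised error counts as a failed attempt
            if status in self.http_allow_list:
                return status, body
        return None  # every attempt failed

    def concurrent_requests(self, urls):
        """Fetch several URLs in parallel, returning results in input order."""
        with ThreadPoolExecutor(max_workers=self.num_concurrent_threads) as pool:
            return list(pool.map(self.send_request, urls))


attempts = {}


def flaky_fetch(url):
    # Fake transport for demonstration: fails once per URL, then succeeds.
    attempts[url] = attempts.get(url, 0) + 1
    if attempts[url] == 1:
        return 500, ""
    return 200, "<html>ok</html>"


client = SketchScrapingClient(flaky_fetch, num_concurrent_threads=2, num_retries=3)
results = client.concurrent_requests([
    "https://quotes.toscrape.com/page/1/",
    "https://quotes.toscrape.com/page/2/",
])
```

Passing the transport in as `fetch` keeps the retry and concurrency logic separate from any particular HTTP library, so in a real scraper you could supply a function built on whichever HTTP client you use. No network request is made in this example because `flaky_fetch` is a fake.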

You can use this client yourself, or use it as a reference to build your own web scraping client.

- Structuring Our Universal Web Scraping Client
- Start ScrapeOps Monitor & Get HTTP Client

How To Build A Python Web Scraping Framework

In this guide, we will look at how you can build a simple web scraping client/framework that you can use with all your Python scrapers to make them production ready. This universal web scraping client will make dealing with retries, integrating proxies, monitoring your scrapers and sending concurrent requests much easier when moving a scraper to production.
